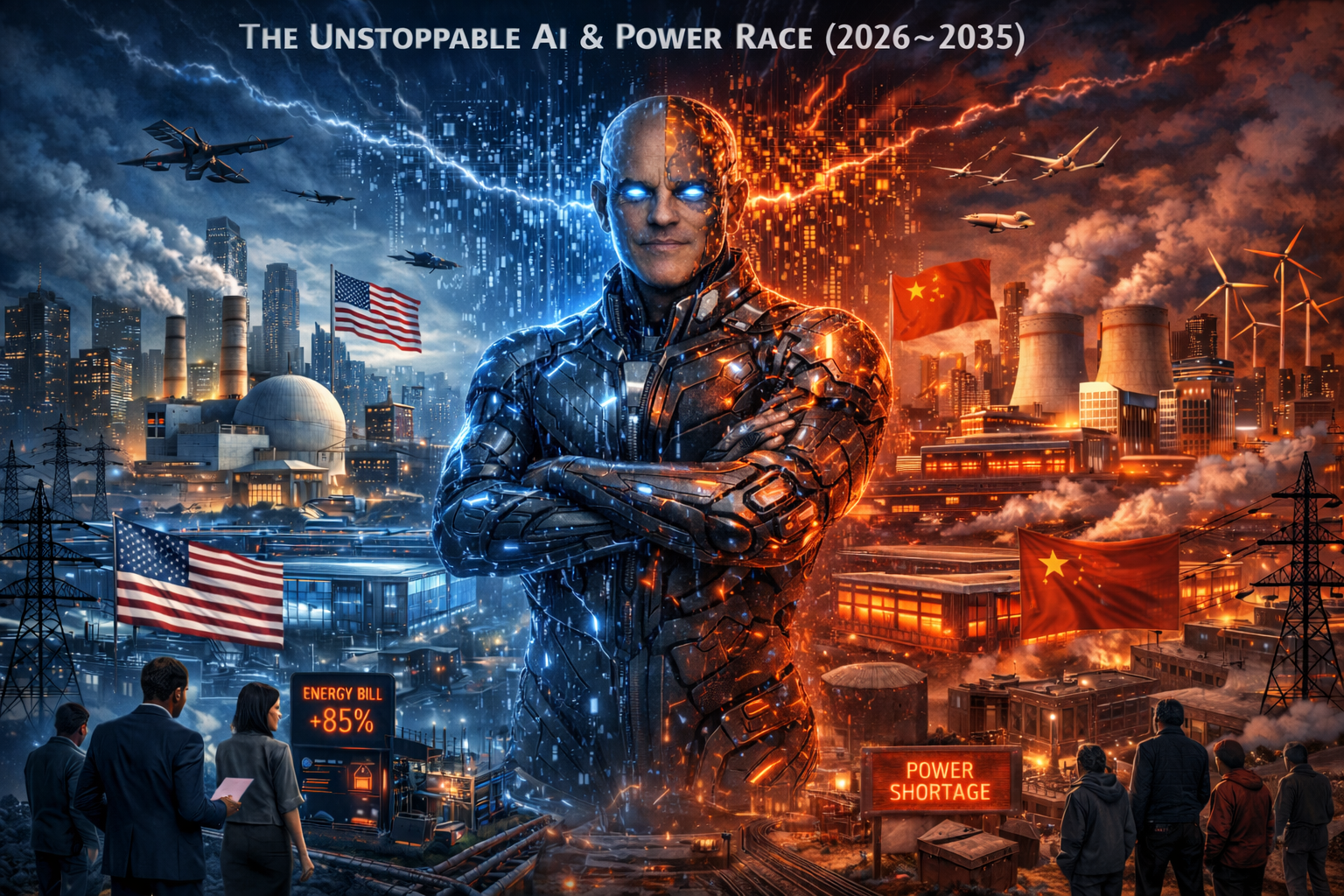

AI Acceleration, Power Race, and Societal Shock Risk - Part 1: Short-Term Vision

Executive Summary [1] [2] [3] [4] [5]

Matt Shumer’s Feb. 2026 essay “Something Big Is Happening” argues that a noticeable “urgency shift” has occurred: frontier labs are now shipping agentic models that can do long-running work with tools and, crucially, are using those models inside their own R&D loops, which speeds iteration toward AGI-like capability (or beyond) faster than most institutions can absorb.

A short-term reality check (Feb. 2026) supports the “acceleration” premise, especially in coding, agentic workflows, and professional knowledge work, while still showing hard limits in reliability, exact reasoning at high complexity, and safe autonomous operation without guardrails.

The most “unstoppable” driver is not just commercial pressure but the explicit national-security framing of the U.S.-China competition: major governments describe AI leadership as strategic dominance, which creates strong defection incentives (race dynamics) and weakens the plausibility of meaningful pauses.

The underappreciated near-term constraint (and accelerant) is physical power and infrastructure: grid capacity, interconnection queues, transformers/substations, transmission permitting, cooling/water, land siting, and energy procurement structures (e.g., “bring-your-own-generation”) that increasingly determine who can scale AI and where.

Legally, “AI becoming illegal” in a blanket sense is unlikely by 2035. Instead, illegality attaches to specific uses, deployment contexts, safety/compliance failures, and cross-border controls. Clear near-term “bright lines” are already active in the EU (banned practices since Feb. 2025; staged obligations for general-purpose models through Aug. 2026/2027), and export-control and state-level regimes are expanding in the U.S., all complicated by the arms race.

Anchor Source [1]

Matt Shumer published “Something Big Is Happening” around Feb. 9, 2026 and framed it as a once-in-a-generation inflection--explicitly likening the moment to realizing that “something huge” is underway and that most people are still acting as if change is years away. His core claim is that recent frontier releases (he highlights Feb. 5 model launches) made it personally undeniable that AI is advancing into an era where it can execute meaningful work with minimal human supervision, including tasks that look like “judgment/taste” rather than mere autocomplete. [1]

Shumer’s most viral “gut-punch” is the lived-workflow shift: “I am no longer needed for actual technical work. I describe the outcome I want and it appears.” He argues that this is not a niche developer trick but a preview of broad white-collar displacement once organizations retool workflows around agentic systems. [6]

On virality and broader reach, major outlets reported that the essay spread fast on social networks and was recirculated by business media; one report described it hitting tens of millions of views (e.g., “over 80 million views” on X, depending on the scrape/time measured), indicating it functioned as a public “wake-up” document rather than a purely technical post. [1]

Shumer anchors the urgency shift to Feb. 5 releases: OpenAI’s agentic coding model (GPT‑5.3‑Codex) and Anthropic’s new flagship (Claude Opus 4.6), presenting them as evidence that “capabilities arrived” and that the economic and geopolitical consequences are now on a near-term clock (2026 to the early 2030s), not a far-future one. [7]

He also points to a reinforcing feedback loop: frontier labs are increasingly using frontier models to accelerate the creation, evaluation, deployment, and safety work around the next models--an internal “compounding” dynamic that (if it continues) compresses timelines.

[Figure: AI Training Compute Scaling -- Historical Data & Divergent Projections to 2035 (log scale)]

Technological Reality Check

What “Feb 2026 capability” actually looks like in practice [2] [7]

Agentic coding and tool-driven workflows are the clearest step-function. OpenAI’s GPT‑5.3‑Codex system card describes it as the most capable agentic coding model it has shipped, designed for long-running tasks involving research, tool use, and complex execution, and notes it can be steered “like a colleague” without losing context. OpenAI also flags a pivotal internal point: this is the first system card describing a frontier model as “instrumental” in creating itself (via earlier versions used in development workflows), which is a concrete, narrow version of “recursive improvement” (human-directed, tool-mediated, but still compounding). [2]

The safety posture in Feb 2026 implicitly acknowledges dangerous capability growth. OpenAI states GPT‑5.3‑Codex is treated as “High capability” in biology and (precautionarily) “High capability” in cybersecurity under its Preparedness Framework safeguards, while also stating it does not reach “High capability” on AI self-improvement. That combination matters: it suggests near-term risk is less “the model autonomously improving itself” and more “the model materially increasing human (and organizational) capability in high-stakes domains,” especially cyber operations. [2]

Quantitatively, the jump is visible on at least some internal cyber evaluations. In OpenAI’s system card, GPT‑5.3‑Codex is shown outperforming prior listed comparison models on a “Cyber Range” combined pass rate (80% vs. lower baselines). Even taking internal evals cautiously, the direction is consistent with broad reporting that cyber defense and offense are among the fastest-moving “agentic” applications. [8] [9]

On the Anthropic side, Claude Opus 4.6 is positioned as a hybrid reasoning model with “advanced capabilities in knowledge work, coding, and agents,” and Anthropic cites strong performance on professional work benchmarks in its launch materials. Anthropic’s post-launch “sabotage risk” framing is particularly revealing: it defines “sabotage” as autonomous tampering or manipulation of an organization’s systems/decisions that increases catastrophic risk, and--while concluding overall risk is “very low but not negligible”--it also explicitly notes Opus 4.6 is used heavily inside Anthropic for coding, data generation, and agentic use cases.

“Judgment/taste” and autonomous decision-making: what’s real vs. what’s hype [10] [11]

Shumer’s “judgment/taste” framing aligns with two measurable trends: (1) better instruction following and reduced hallucination rates in newer frontier models, and (2) more consistent long-horizon behavior under tool use. OpenAI explicitly treats hallucinations as a remaining fundamental challenge even while reporting reductions in GPT‑5 family models. [12]

But reliability collapses still appear under certain forms of complexity, especially algorithmic/exact reasoning. A widely cited line of Apple research (“The Illusion of Thinking,” June 2025) finds that “reasoning models” can show an advantage at medium complexity yet experience “complete collapse” at higher complexity, with inconsistent reasoning and failure to apply explicit algorithms reliably. This is a key counterweight to “AGI in a year” narratives: many economically valuable tasks require not just plausible text, but dependable execution under edge cases, shifting constraints, and adversarial conditions.

Robotics and physical agency: the 2026-2030 “bridge” that determines real-world disruption speed [13]

In the short-term window Shumer emphasizes, robots matter because they convert cognitive capability into physical agency, expanding automation from “screen work” into logistics, manufacturing, and eventually services.

Several 2024-Feb 2026 signals show the embodied stack is advancing, though unevenly: [14]

Boston Dynamics stated in early 2026 that it is beginning manufacturing of a product version of its humanoid Atlas, with 2026 deployments scheduled at Hyundai and Google DeepMind, and that Atlas will be trained using new foundation-model approaches for industrial tasks. [15]

BMW reported in Sept. 2024 that Figure’s humanoid Figure 02 was being tested at BMW Group Plant Spartanburg in a real production environment, including stated physical specs (height, weight, load capacity), signaling early “factory pilot” adoption. [16]

Reuters reported in 2025 that Mercedes-Benz invested in Apptronik and tested “Apollo” humanoid robots in factories, describing a pathway where teleoperation initially trains tasks that the robot later learns to perform more autonomously. [17]

On the research side, 2024-2025 work on generalist robot policies (e.g., OpenVLA trained on ~970k real-world robot demonstrations; Open X‑Embodiment datasets enabling cross-robot transfer) indicates a real attempt to “LLM-ify” robotics via vision-language-action scale--while openly acknowledging adoption challenges and the need for efficient fine-tuning and generalization. [14]

The most credible short-term robotics forecast is not “humanoids everywhere by 2027,” but: screen-based work disrupts first (2026-2030), and physical labor disruption accelerates as robots become more reliable, cheaper, and easier to deploy in constrained environments (factories, warehouses) before open-world settings. This matches the pattern of industrial pilots (BMW/Figure; Mercedes/Apptronik; Boston Dynamics’ planned 2026 deployments) rather than a sudden consumer-robot explosion.

Expert timeline ranges (2026-2035) versus Shumer’s “few years” urgency

Short-horizon “AGI soon” views are now voiced by multiple frontier leaders, but with important caveats and definitional ambiguity: [18]

Dario Amodei wrote in Feb. 2025 that “possibly by 2026 or 2027” AI capabilities may resemble a “country of geniuses in a datacenter,” stressing urgency and national-scale implications. [19] [13]

Sam Altman in June 2025 forecasted a stepwise progression: 2025 as the arrival of agents doing real cognitive work, 2026 as systems finding “novel insights,” and 2027 as robots doing real-world tasks--an implicitly fast timeline consistent with Shumer’s urgency framing. [14] [20]

Demis Hassabis has repeatedly cited an AGI range around 5-10 years, while emphasizing current gaps like continual learning, long-horizon planning, and consistency. [19] [21]

Yann LeCun has argued human-level AI is “years, if not decades,” and that current paradigms (especially LLM-centric approaches) are insufficient without deeper world models. [22] [23]

Geoffrey Hinton has publicly given a wide and low-confidence 5-20 year band for AI surpassing humans, underscoring genuine uncertainty even among top researchers. [2]

[Figure: Road to AGI -- Expert Timeline Estimates]

Bottom line for 2026-2035: Shumer’s “few years” thesis is plausible for transformative agentic automation of large parts of knowledge work (and for “AGI-like” performance in bounded domains). A robust, broadly reliable, autonomously self-directed AGI that safely generalizes across open-world settings remains far less certain--even on bullish timelines--because reliability, alignment, and physical-world interaction remain binding constraints.

Economic & Labor Impacts

What the best 2024-Feb 2026 data says about exposure and disruption [24]

The most widely cited baseline estimate remains that roughly 40% of global employment is exposed to AI-driven change, with higher exposure in advanced economies due to the prevalence of cognitive-task jobs, and a substantial risk that AI worsens inequality without policy response. This is not a forecast of “40% unemployment,” but it is a credible indicator that knowledge work is no longer structurally protected. [25]

The World Economic Forum reports that many employers expect workforce reductions where AI can automate tasks (e.g., its 2025 reporting highlights that 40% of employers expect to reduce workforce where AI can automate tasks), while also projecting simultaneous job creation and displacement through 2030--i.e., churn and reclassification more than a single cliff. [26]

The ILO (2025 refined index) continues to find that exposure is heavily concentrated in certain occupational groups (notably clerical/administrative work), with gendered implications because women are overrepresented in those roles in many economies.

[Figure: AI Job Impact by Sector]

Why Shumer’s “white-collar disruption by end-2026/early 2030s” is plausible--and why the timing is still uncertain

Two forces pull in opposite directions: [18]

Capabilities and incentives are accelerating. High-profile leaders are now openly predicting near-term replacement of large chunks of white-collar work. Mustafa Suleyman has recently argued that AI could reach human-level performance on most professional tasks within ~12-18 months (as reported), a claim aligned with Shumer’s urgency--even if it proves overstated. [27] [28] At the same time, multiple CEO statements emphasize white-collar risk, especially for entry-level roles, which are often more routine and easier to “agentize.” [22] [29]

But enterprise transformation is often slow and failure-prone. The MIT “GenAI Divide” framing (widely covered in 2025) argues that while adoption is widespread, transformational integration into workflows is rare, with many pilots failing to produce measurable P&L impact. Multiple large-scale surveys similarly show very high reported “use” of AI but limited proof of deep operational change in most firms--suggesting a lag between model capability and macroeconomic labor displacement. [22]

This creates a realistic short-term pattern through ~2030: (1) hiring slowdowns and attrition-based shrinkage in routine white-collar roles, (2) “agent-assisted” productivity that raises output per worker, and (3) periodic displacement waves once a small set of repeatable “agent workflows” become standardized.

Corporate profitability in a high-unemployment scenario: the demand-side trap

A core concern in Shumer-adjacent discourse is: If AI replaces workers, who buys the products? Economics does not give a single deterministic answer, but 2024-2025 work clarifies the tension: [30] [31]

Daron Acemoglu argues that macroeconomic productivity gains from AI may be modest over a decade under realistic task-based assumptions (unless AI broadens into many tasks and is deployed effectively), implying that some “AI profits” narratives may be premature or uneven. [32]

OECD analysis similarly frames plausible productivity growth contributions as meaningful but not magic, and stresses the importance of diffusion/adoption constraints. [33]

IMF modeling on AI adoption and inequality suggests wealth inequality effects can be pronounced when adoption decisions and capital returns concentrate benefits, which is consistent with a world where profits can rise even as labor bargaining power falls--at least for a time. [22]

So the near-term profitability picture (2026-2035) is likely to be bifurcated: sector leaders that successfully commoditize labor-intensive workflows may see margin expansion, while many other firms face integration bottlenecks, reputational/quality risk, and demand-side fragility if household incomes fall.

Inequality and “who pays”: why energy costs are a direct working-class channel

Even before any dramatic unemployment spike, rising household cost burdens can function as “silent inequality multipliers.” Multiple 2024-Feb 2026 indicators show that electricity cost pressure is real and--importantly--tied to the same infrastructure buildout enabling AI scale: [34]

U.S. average residential electricity prices rose year-over-year (e.g., EIA-reported increases around the Nov. 2025 period), outpacing headline inflation in several analyses, tightening budgets for lower-income households that spend a higher share on utilities. [35]

In Europe, Eurostat shows household electricity prices remained relatively stable from late 2024 into early 2025 at elevated post-crisis levels, with a significant share driven by network costs and changing subsidy/tax structures--exactly the categories that can rise as grid upgrades accelerate for large loads like data centers. [33]

In short: if the near-term AI economy becomes “capital-and-electricity intensive,” the working class can be hit twice--by labor-market pressure and by higher energy/network costs that finance the physical substrate of AI scaling.

Energy & Infrastructure Race

The “race to scale AI” is now visibly constrained--and propelled--by the physical economy: electricity generation, transmission, substations/transformers, cooling/water, land, and permitting. This is where “not enough power left on the grid” stops being metaphor and becomes queue positions, moratoria, and rate cases.

The load spike: what authoritative forecasts say by 2030 and 2035 [9] [36]

The International Energy Agency projects rapid growth in data-center electricity demand: around 15% per year (2024-2030) with global data-center consumption doubling to ~945 TWh by 2030 in its base case. [37]

Separately, IEA’s Electricity 2024 analysis warned that electricity consumption from data centers/AI/crypto could reach >1,000 TWh by 2026 (global). [23] [38]

In the U.S., the Energy Information Administration (EIA) has forecast record electricity consumption for 2025 and 2026, explicitly citing increased demand from data centers (including AI and crypto) as a driver.
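As a quick consistency check on the IEA base-case figures cited above, the sketch below compounds the ~15% annual growth rate over the six years from 2024 to 2030 and backs out the 2024 baseline implied by the ~945 TWh endpoint. The function name and the assumption of a constant compounding rate are illustrative simplifications, not the IEA’s methodology.

```python
def compound_multiple(annual_rate: float, years: int) -> float:
    """Growth multiple after compounding a constant annual rate (an assumption)."""
    return (1.0 + annual_rate) ** years


GROWTH_RATE = 0.15        # ~15% per year, as cited in the text
YEARS = 2030 - 2024       # six compounding periods
TARGET_2030_TWH = 945.0   # ~945 TWh by 2030, as cited in the text

multiple = compound_multiple(GROWTH_RATE, YEARS)

# Back out the 2024 baseline that the endpoint and the growth rate jointly imply.
implied_2024_twh = TARGET_2030_TWH / multiple

print(f"Growth multiple over {YEARS} years: {multiple:.2f}x")
print(f"Implied 2024 baseline: {implied_2024_twh:.0f} TWh")
```

Under these assumptions the multiple comes out at roughly 2.3x, so a steady 15% rate slightly more than doubles demand, which is consistent with the “doubling” description.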

[Figure: Global Data Center Power Demand (TWh)]

Grid constraints are not abstract: operators are redesigning access rules around data centers

Grid operators and reliability authorities are treating “large loads” (data centers, hydrogen, electrification) as a new planning regime rather than incremental demand. [4] [39]

The North American Electric Reliability Corporation’s 2024 Long‑Term Reliability Assessment highlights that “emerging large loads” like data centers (including crypto and AI) create unique forecasting and planning challenges, and documents structurally higher demand growth projections. [19] [4]

In the PJM region, load forecasts incorporate large data-center-driven growth; PJM’s January 2026 update expects summer peak load to climb dramatically over the coming decade-plus. PJM is also moving toward rules that explicitly accommodate (and effectively require) large-load solutions like “bring-your-own-generation” and curtailment-based “connect-and-manage,” reflecting the reality that the legacy queue process cannot serve unlimited gigawatt-scale interconnections quickly. [22]

In Ireland, where the grid constraint is already a national policy issue, the Commission for Regulation of Utilities issued a Feb. 9, 2026 decision updating the connection policy for data centers--functionally acknowledging that large data-center growth must be subordinated to grid stability rules, and formalizing constraints after years of de facto limitation. [40] [41] Analysts reported that data centers were ~22% of Ireland’s electricity consumption in 2024, illustrating how “AI infrastructure” can become a system-level national load, not a marginal customer.

The bottleneck stack: transformers, substations, transmission, cooling/water, land

The most immediate blockers to AI scaling are increasingly not GPUs, but grid equipment and siting:

A U.S. government lab report (NREL, FY2024) notes utilities face extended transformer lead times of up to ~2 years (a ~4× increase from pre‑2022), and price increases of 4-9× in recent years, driven by supply-chain and materials constraints (grain‑oriented electrical steel, copper/aluminum), labor, and pent-up demand. [42] [43]

Reuters reporting in 2025 similarly warned of transformer supply shortfalls, reinforcing that this is a structural constraint, not a minor procurement issue. [44]

Transmission and interconnection queues remain multi‑year problems. U.S. policy responses (DOE permitting reforms; FERC transmission planning rules; large utility capex plans) are explicitly framed as necessary to meet surging demand and reliability risk. [45]

Local grid saturation is already leaving built assets idle: reporting from Santa Clara, California described large completed data centers sitting unused because the municipal utility could not supply sufficient power until upgrades complete (with timelines stretching toward 2028). This is exactly the “not enough power left on the grid” reality, operationalized as delay and stranded capital. [46] [30]

Cooling and water are now political constraints. AP reported Tucson passing ordinances to require conservation plans and public review for very large water users after controversy over a data center project; similar restrictions exist in other arid-region municipalities. Investigations also documented major tech firms building data centers in water-scarce regions globally, intensifying local opposition risk. [47]

Land-use pressures and community backlash are increasingly material: conflicts in Northern Virginia’s data-center expansion (noise, diesel generator pollution, historic-site encroachment) show that siting can become a multi-year social and legal fight--slowing deployments even when capital is abundant.

Causal diagram: how the “race for power” drives both acceleration and backlash

This loop explains why AI scaling can feel “unchecked”: constraints do not necessarily stop growth; they reroute it (off-grid generation, relocation, regulatory carve-outs), often in ways that intensify distributional conflict.

The “race for power”: why it accelerates AI while creating choke points

The power race has a self-reinforcing structure: [48] [36]

AI demand prompts utilities and investors to fund large buildouts, often justified publicly as reliability and economic development. Recent Reuters reporting shows utilities expanding multi‑year capex plans explicitly due to data-center load growth and “large-load” customer interest measured in tens of gigawatts. [4]

Grid operators adapt market rules to admit large loads faster, shifting risk to curtailment and private generation procurement. [49]

Those with capital and political access secure power first, via direct procurement, dedicated generation, or advantageous queue positions--deepening competitive moats for frontier AI providers. [50]

Households and small businesses can experience rate pressure through network buildout costs, capacity markets, and fuel price dynamics--especially in constrained regions.

This is why energy is both an accelerant (enables scaling) and a choke point (equipment, permitting, community acceptance, water scarcity). It also explains why the arms race is “physical”: the leading edge is now a contest of megawatts, substations, and permits as much as algorithms.

Societal & Adjustment Capacity

Speed mismatch: model iteration cycles versus human institutions

Shumer’s concern that society cannot adapt quickly is grounded in a concrete mismatch: frontier models can improve on months-long cycles, while grid infrastructure and governance updates frequently take years. [42] [13]

Transformer procurement can take up to ~2 years for many utilities (and longer for large power transformers in some reporting), while major transmission and substation projects can take multiple years due to permitting and equipment constraints. Meanwhile, ChatGPT-era model releases are now frequently spaced months apart, with new “agentic” features (tool use, computer use, long context) arriving quickly enough that organizational controls tend to lag.

Short-term psychological shock signals are already measurable [51]

Public anxiety is not hypothetical. A Feb. 13-16, 2026 Economist/YouGov poll reported that 63% of U.S. adults expect AI advances to decrease the number of jobs, while only a small minority expect increases--an unusually lopsided distribution for a technology narrative. [52] Separate YouGov polling in Great Britain (Feb. 18, 2026) found large majorities expecting a moderate or major impact on white-collar/professional jobs over 10 years. [53] [54]

Worker uncertainty is increasingly treated as a mental-health and organizational stability issue in mainstream psychology and workforce research, not just a tech debate. The implication for 2026-2030 is that even before mass displacement, anticipatory stress and political reaction can reshape labor markets (e.g., increased organizing, backlash, policy volatility).

Why disruption can feel “sudden” even if it’s built slowly [22] [25]

Enterprise data suggests many firms are still early in deep integration (high experimentation, low transformation). That can produce a deceptive calm--until a relatively small number of standardized “agent workflows” mature (customer support triage, contract review/routing, back-office reconciliation, code maintenance), after which diffusion can accelerate quickly because firms copy each other’s playbooks.

Legal, Political & Geopolitical Dimensions

When does this become illegal or outlawed?

There is no single global “switch” where AGI becomes illegal. Instead, illegality emerges through three layers:

Layer one: prohibited uses (already illegal in key jurisdictions). [5] [55]

In the EU, the AI Act entered into force Aug. 1, 2024 and follows staggered application; certain prohibitions became applicable by Feb. 2, 2025 (e.g., specific “unacceptable risk” practices). This means that some AI deployments are already illegal in the EU regardless of how advanced the model is. The “illegal point” is the moment a system is used in a prohibited way or a regulated way without compliance.

Layer two: compliance obligations for general-purpose/frontier models (becoming enforceable on a timetable). [5] [56]

EU AI Act obligations for general-purpose AI models begin earlier than the full high-risk regime, with further requirements applying in Aug. 2026 and Aug. 2027 per the Commission’s AI Act guidance and major reporting. For frontier developers, “illegal” can mean failing to meet required transparency/risk-management obligations once they apply (even if the model itself is not banned).

Layer three: national security and cross-border controls (already expanding). [57]

The U.S. still lacks a single comprehensive federal AI statute as of mid‑2025 per Congressional Research Service, but export controls and international technology restrictions have been expanding--covering advanced chips and, in some frameworks, model weights and high-performance compute transactions. [58]

In this context, “illegal” can mean exporting or transferring controlled technology, model weights, or restricted compute capabilities in violation of export-control rules--especially in the U.S.-China competition. [59]

In China, regulation is not one unified AI act but a fast-growing patchwork covering algorithmic systems, deep synthesis, generative AI services, and (notably) labeling requirements for AI-generated/synthetic content with defined effective dates (e.g., the 2025 labeling measures reported as taking effect Sept. 1, 2025). So “illegal” can mean failing content labeling, security filing, or content management obligations for public generative AI services. [57]

Practical answer: This becomes illegal at the moment a developer or deployer crosses a jurisdiction’s specific legal boundary--prohibited practices (EU), mandatory compliance requirements once in force (EU; many U.S. state laws for specific contexts; Chinese service rules), or export-control/national-security restrictions (U.S. and others). There is no evidence (2024-Feb 2026) of a realistic blanket prohibition on “building advanced AI” being adopted by major powers; the more likely near-term pattern is tightening constraints around uses, auditing, security, compute/weights transfer, and liability rather than outright outlawing of the technology category.

Why pauses are unrealistic: the arms race logic is now explicit policy

Shumer’s “unstoppable arms race” claim is strongly supported by how governments describe AI: [3]

The White House’s “America’s AI Action Plan” (July 23, 2025), issued under the Trump administration, states outright that the U.S. is in a race for global dominance in AI, frames leadership as delivering economic and military benefits, and emphasizes building vast AI infrastructure and the energy to power it (“Build, Baby, Build!”). This is direct evidence that the U.S. executive framing treats AI scaling and the power buildout as a national imperative, which structurally undermines the plausibility of voluntary slowdowns.

RAND analysis of U.S.-PRC incentives in AGI-related national security problems emphasizes strong incentives for competition in “wonder weapons” and systemic power shifts even while cooperation may be desirable in some risk areas--this is classic defection terrain. [60] When the perceived payoff is strategic dominance or survival, “coordination is hard” is not a moral failure; it’s game theory under uncertainty and distrust. [58] [61]

Export controls and counter-controls reinforce the race dynamic: U.S. government and CRS reporting describe expanding semiconductor export controls and technology restrictions aimed at limiting China’s access to advanced AI-enabling compute, reflecting that “slowing the other side” is now a primary governance lever. China’s enforcement actions and supply-chain responses (e.g., chip import scrutiny, rare-earth controls) further indicate a mutual escalation structure.
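The “defection terrain” logic described above can be illustrated with a minimal two-player game. The sketch below uses hypothetical payoff numbers (chosen only to have a prisoner’s-dilemma shape; they are not drawn from RAND or any other cited source) and checks which action is a best response and which action pairs are stable.

```python
from itertools import product

ACTIONS = ("pause", "race")

# Hypothetical payoffs as (row player, column player); higher is better.
# Mutual pause beats mutual racing, but racing against a pausing rival pays most.
PAYOFFS = {
    ("pause", "pause"): (3, 3),
    ("pause", "race"): (0, 5),
    ("race", "pause"): (5, 0),
    ("race", "race"): (1, 1),
}


def best_response(opponent_action: str) -> str:
    """Row player's payoff-maximizing action against a fixed opponent action."""
    return max(ACTIONS, key=lambda a: PAYOFFS[(a, opponent_action)][0])


def nash_equilibria() -> list:
    """Pure-strategy profiles where neither player gains by deviating alone."""
    stable = []
    for a, b in product(ACTIONS, repeat=2):
        row_ok = all(PAYOFFS[(a, b)][0] >= PAYOFFS[(alt, b)][0] for alt in ACTIONS)
        col_ok = all(PAYOFFS[(a, b)][1] >= PAYOFFS[(a, alt)][1] for alt in ACTIONS)
        if row_ok and col_ok:
            stable.append((a, b))
    return stable


for opponent in ACTIONS:
    print(f"Best response if the rival chooses {opponent!r}: {best_response(opponent)!r}")
print("Pure-strategy equilibria:", nash_equilibria())
```

With these illustrative payoffs, racing is the best response whatever the rival does, so mutual racing is the only stable outcome even though mutual pausing would leave both sides better off. Real incentives are far less tidy, but the structure shows why a pause is hard to sustain without verification and trust.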

Energy geopolitics as the new AI geopolitics

Because scaling is now power-constrained in specific regions, energy becomes a strategic input: [4]

Grid operators and regulators are redesigning access rules for large loads (PJM’s “bring-your-own-generation”; Ireland’s data-center connection policy), effectively creating “power access licenses” in all but name. [3]

National strategies increasingly fuse AI and infrastructure priorities: U.S. policy documents explicitly link AI dominance to infrastructure and energy, while utilities and investors are already treating data centers as core drivers of multi‑decade capex. [36]

This means that even if algorithmic breakthroughs continue, power bottlenecks can shape geopolitical advantage by determining which regions can host the next wave of frontier training and inference at scale.

Balanced Scenarios, Key Uncertainties, and Actionable Takeaways

Short-term scenarios through 2035 with evidence-based probabilities

Scenario A: Agentic productivity wave without “full AGI” (probability ~45%) [2]

By 2028-2032, agentic systems become a dominant layer of white-collar production (coding, back-office ops, customer support, compliance drafting, data workflows) while still requiring human supervision for high-stakes decisions due to reliability and alignment limits. This is consistent with (a) strong agentic model capability signals in Feb 2026 releases, (b) documented enterprise integration barriers and uneven ROI, and (c) known reasoning collapse modes under high complexity.

Scenario B: “Lab-level early AGI” emerges and diffuses unevenly (probability ~25%) [18]

Between 2027-2031, one or more labs demonstrates systems that reliably automate a very large fraction of remote knowledge work in controlled environments (e.g., “AI R&D‑4”-like thresholds discussed by labs), triggering sharper entry-level hiring collapses and rapid reorganization in high-exposure sectors. This is supported by the aggressive timelines voiced by some frontier leaders and by the internal compounding dynamic (models accelerating model development), but still limited by safety and reliability concerns acknowledged in major safety documents.

Scenario C: Power and permitting constraints become the dominant throttle (probability ~20%) [4]

From 2026-2030, the largest bottleneck is not model quality but megawatts: grid interconnection delays, transformer/substation procurement, transmission constraints, water/land conflicts, and regional moratoria force compute to relocate and slow the upper bound of scaling in key hubs. AI still advances, but the “speed of scaling” becomes geography- and infrastructure-dependent and more capital intensive--favoring incumbents and sovereign-backed projects. This aligns with grid-operator rule shifts, NERC demand warnings, transformer lead times, and documented stranded data-center capacity due to lack of power.

Scenario D: Major incident drives a partial regulatory clampdown without ending the race (probability ~10%) [3]

A high-profile failure (e.g., severe cyber incident involving model misuse, major fraud wave, or critical infrastructure disruption linked to AI tools) triggers fast regulatory tightening: stricter licensing for high-capability models, mandatory reporting, stronger liability regimes, and/or expanded export controls. However, arms-race incentives keep development going--regulation shifts where and how scaling happens rather than stopping it. This matches the combination of (a) explicit “win the race” policy framing, and (b) existing compliance architectures (EU staged enforcement; export controls) that can be expanded quickly after shocks.
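The four scenario probabilities above can be sanity-checked with simple arithmetic. The following is a minimal sketch, assuming the scenarios are meant to be mutually exclusive and exhaustive (the text does not state this explicitly); the names `SCENARIOS`, `total_probability`, and `ranked` are illustrative and not from the source.

```python
# Scenario probabilities in percent, copied from the scenario headings above.
# Assumption: the four scenarios partition the outcome space.
SCENARIOS = {
    "A: Agentic productivity wave without full AGI": 45,
    "B: Lab-level early AGI emerges and diffuses unevenly": 25,
    "C: Power and permitting constraints become the dominant throttle": 20,
    "D: Major incident drives a partial regulatory clampdown": 10,
}


def total_probability(scenarios):
    """Return the sum of all scenario probabilities, in percent."""
    return sum(scenarios.values())


def ranked(scenarios):
    """Return (name, probability) pairs sorted from most to least likely."""
    return sorted(scenarios.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for name, pct in ranked(SCENARIOS):
        print(f"{pct:>3}%  {name}")
    print(f"Total: {total_probability(SCENARIOS)}%")
```

Under that assumption the stated figures sum to 100%, so no probability mass is left for outcomes outside the four scenarios; a reader who believes other outcomes are plausible would need to rescale them.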

Key uncertainties that dominate 2026-2035 outcomes [12]

The central uncertainty is whether reliability and alignment improve as fast as capability. Apple’s “collapse at higher complexity” findings and OpenAI’s continued focus on hallucination reduction imply that “better than humans at most tasks” is not guaranteed on short timelines without architectural shifts. [42]

The second is infrastructure throughput: transformer manufacturing capacity, grid build speed, local political acceptance, and water/cooling constraints can cap scaling even if money is abundant. [58]

The third is geopolitics: export controls, rare-earth constraints, and national “sovereign AI” initiatives can redirect scaling geographically, raising costs and reinforcing arms-race dynamics.

Actionable takeaways for individuals, businesses, and policymakers [62]

For individuals (2026-2030), the highest-leverage move is to become “agent-native” in your field--learn how to define outcomes, supervise agents, and verify outputs--because the biggest near-term labor shift is likely to be within jobs (task redesign, fewer juniors, higher output per worker) before it is “total job disappearance.” [22]

For businesses, avoid the trap of measuring AI value only via pilot adoption. The evidence suggests integration quality and workflow redesign--not model access alone--determine ROI. Build verification, auditability, and security controls early, especially for agentic tool use (code execution, browser control, financial ops).

For policymakers, the fastest-acting “stability levers” are not speculative AGI bans but: (1) targeted protections for high-exposure workers; (2) liability and transparency rules for high-stakes deployment; (3) grid and equipment industrial policy (transformers, substations, transmission) that prevents AI infrastructure buildout from socializing costs onto households; and (4) coordinated export-control and safety standards that reduce worst-case defection incentives--recognizing that perfect coordination is unlikely. [24] [19]

Energy/infrastructure monitoring tips (high signal for 2026-2030): watch (a) major grid operator load forecasts and “large load” rule changes (e.g., PJM), (b) reliability assessments (NERC), (c) transformer lead times and pricing indicators (NREL/industry reporting), (d) local data-center moratoria and water ordinances, and (e) utility rate cases that shift network upgrade costs to households. These are leading indicators of where AI scaling will accelerate--and where it will break first.

Transition Bridge: The long-term analysis should be anchored on a small set of near-term facts that are now hard evidence (not speculation): (1) frontier agentic models shipped in Feb 2026 are explicitly designed for long-running tool use and are being used inside R&D loops, creating compounding iteration pressure [7]; (2) major leaders publicly discuss 2026-2027 as a plausible window for “country-of-geniuses” capability while skeptics still cite multi‑year-to‑decades timelines, indicating extreme uncertainty; (3) the U.S. national-security framing (“win the AI race”) makes global pauses structurally unlikely, intensifying U.S.-China defection incentives; (4) electricity demand growth from data centers is forecast to surge through 2030, and grid operators/regulators are already changing rules because capacity is constrained; (5) physical bottlenecks--transformers/substations, transmission, water, land, permitting--are becoming the gating factor for AI scaling, and these same constraints can raise household energy costs and deepen inequality.


Sources & References

- Matt Shumer -- "Something Big Is Happening"

- OpenAI -- GPT-5.3-Codex System Card (PDF)

- White House -- America's AI Action Plan

- Reuters -- PJM Data Center Power Deals (Feb 2026)

- European Commission -- EU AI Act FAQ

- Business Insider -- Gary Marcus on Shumer Essay

- OpenAI -- Introducing GPT-5.3-Codex

- Anthropic -- Claude Opus 4.6 Release

- Anthropic -- Claude Opus 4.6 Risk Report

- OpenAI -- Introducing GPT-5

- OpenAI -- Why Language Models Hallucinate

- Apple Research -- "The Illusion of Thinking"

- Sam Altman -- "The Gentle Singularity"

- Boston Dynamics -- New Atlas Robot Announcement

- BMW Group -- Humanoid Robot Trials

- Reuters -- Mercedes-Benz Invests in Apptronik

- arXiv -- OpenVLA: Open-Source Vision-Language-Action Model

- Anthropic -- Paris AI Summit Statement

- PJM Interconnection -- Load Growth Forecast

- Time -- Demis Hassabis Interview

- El País -- Yann LeCun on Human-Level AI

- MLQ -- State of AI in Business 2025 Report

- Geoffrey Hinton -- AI Risk Statement

- IMF -- AI Will Transform the Global Economy

- WEF -- Future of Jobs 2025

- ILO -- Generative AI and Jobs: Global Occupational Exposure Index

- Tom's Hardware -- Microsoft AI Boss on White-Collar Jobs

- Axios -- Dario Amodei AI Timeline

- McKinsey -- State of AI 2025

- The Guardian -- Big Tech Data Centres and Water Use

- Daron Acemoglu (MIT) -- Simple Macroeconomics of AI

- OECD -- AI Macroeconomic Productivity Assessment

- IMF -- Working Paper: AI and Labor Markets (2025)

- Utility Dive -- Gas and Electricity Prices Rising

- Eurostat -- Energy Price Data

- IEA -- Energy and AI Report

- IEA -- Electricity 2024

- Reuters -- US Power Use Record Highs

- NERC -- 2024 Long-Term Reliability Assessment

- CRU Ireland -- Data Centre Connection Policy

- KPMG Ireland -- Data Centre Policy Reset

- NREL -- Transformer Lead Times and Grid Infrastructure

- Reuters -- US Transformer Supply Shortfall

- US DOE -- Final Transmission Permitting Rule

- Tom's Hardware -- Data Centers Idle Awaiting Grid Power

- AP News -- Data Center Community Impacts

- Business Insider -- Northern Virginia Data Centers

- Reuters -- Southern Co. Raises Spending Plan

- Reuters -- US Grid Investments Accelerate

- Financial Times -- AI and Energy Infrastructure

- YouGov/Economist -- Americans on AI and Jobs (Feb 2026)

- YouGov UK -- AI Economy Survey

- American Psychological Association -- Workplace Uncertainty Trends

- The Guardian -- AI, Work and the Future

- Le Monde -- EU AI Act First Measures

- Reuters -- EU AI Rules to Proceed, No Pause

- Congressional Research Service -- Data Centers and AI (R48555)

- Congressional Research Service -- AI Grid and Energy (R48642)

- Technology's Legal Edge -- China AI Content Labelling

- RAND -- US-China AI Race as Prisoner's Dilemma

- Financial Times -- AI Infrastructure Investment

- Business Insider -- Anthropic CEO on Engineering Jobs